
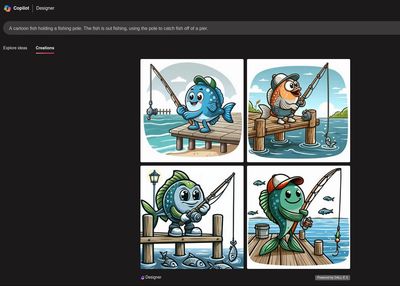

Screenshot with image generated in DALL-E 3.

Using DALL-E 3

Thanks to OpenAI's partnership with Microsoft, DALL-E 3 is available to use for free in several different places:

- Bing's Image Creator (Microsoft Designer) offers the most direct way to create DALL-E 3 images. You'll need a Microsoft account, but signing up for one is free. You're given 15 credits for free every day. Each credit will generate 4 images from a single prompt. If you run out of credits, you can still generate unlimited images, but they will generate more slowly.

- You can use DALL-E 3 from within ChatGPT. A free way to get access to ChatGPT is to use Microsoft Copilot (again, you'll need a Microsoft account). Instead of typing a question, just type "Draw me a picture of" followed by the prompt you'd like to see, and DALL-E 3 will begin generating what you asked for.

History

DALL-E is the granddaddy of AI image generators. The original DALL-E was introduced by OpenAI on January 5, 2021, as a "multimodal" variant of GPT-3, trained to reply to text with images instead of replying to text with text. The name was meant to evoke both the artist Salvador Dalí and Pixar's animated robot WALL-E.

OpenAI made an ongoing deal with stock photography company Shutterstock, allowing DALL-E versions to be trained on millions of high-resolution images and their accompanying metadata. DALL-E 2 followed in 2022, with much better image quality and prompt adherence, and gained far more users. When DALL-E 3 was released, it was integrated with ChatGPT, making it more powerful and more widely accessible.

Prompt Adherence

DALL-E 3 is great at prompt adherence. When I give it a prompt such as:

- A black spider made entirely out of yarn, with red eyes, crawling on a spider web made of green yarn, in a dark old room.

DALL-E 3 will consistently follow all of it, making a spider out of black yarn, making the eyes red, and making the web green. This stands in sharp contrast to many other models. In SDXL, for example, if you mention a color in a prompt, it's common for several things to receive that color, not just the object you specified. DALL-E was so good at this kind of prompt that I had fun asking it to create more elaborate, life-sized yarn sculptures, shown in the album above.

For some far-out concepts, DALL-E improved when I asked it for an illustration or cartoon. For example:

- A fish holding a fishing pole. The fish is out fishing, trying to catch fish off a pier.

This prompt by itself tended to produce human fishermen, but everything changed when I specified "a cartoon fish." What DALL-E couldn't imagine as a realistic photo, it easily generated as a cartoon.

Content Filters

DALL-E 3 scans prompts for potentially objectionable material, to prevent anyone from using it to generate nudity, sexualized images, or violent images. In my experience, OpenAI seems to have tuned its filter to err on the side of being overly cautious. However, if there wasn't anything objectionable about a prompt, resubmitting it with a few words changed, or trying the original prompt again on another day, can sometimes turn a rejected prompt into an accepted one.

Image Output

All of the freely generated images are square, with a resolution of 1024 x 1024 pixels (more about aspect ratio choices below). When initially displayed on the screen, some of them appear to have a logo or watermark in the lower left corner, but when I used the "Download" button, the images I downloaded were clean, with no logo.

Advanced functions in DALL-E 3

DALL-E 3 also has a number of "hidden" options which are not shown when using it for free in Designer or Copilot, but which are documented as being available in the API (the interface that programmers use to interact with DALL-E 3). The extra functions include:

- A choice of 1024 x 1024 (square), 1024 x 1792 (portrait) or 1792 x 1024 (widescreen or landscape) images.

- A choice of image styles, between "vivid" and "natural."

- A choice of quality levels, between "standard" and "hd."
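
As a rough sketch of how a programmer might pass these three options, the Python below assembles and checks a request body. The field names (`model`, `prompt`, `n`, `size`, `style`, `quality`) follow OpenAI's image generation API as I understand it. The function name `build_dalle3_request` and the default values are my own assumptions for illustration. The code only builds the data and never contacts OpenAI.

```python
import json

# Option values as listed above. The API writes sizes without spaces,
# e.g. "1024x1024" rather than "1024 x 1024".
ALLOWED_SIZES = {"1024x1024", "1024x1792", "1792x1024"}
ALLOWED_STYLES = {"vivid", "natural"}
ALLOWED_QUALITIES = {"standard", "hd"}


def build_dalle3_request(prompt, size="1024x1024", style="vivid", quality="standard"):
    """Return a JSON-serializable body for a DALL-E 3 image request.

    Hypothetical helper: the name and the defaults are assumptions made
    for this example, not part of any official library.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if size not in ALLOWED_SIZES:
        raise ValueError(f"unsupported size: {size}")
    if style not in ALLOWED_STYLES:
        raise ValueError(f"unsupported style: {style}")
    if quality not in ALLOWED_QUALITIES:
        raise ValueError(f"unsupported quality: {quality}")
    return {
        "model": "dall-e-3",
        "prompt": prompt,
        "n": 1,  # request a single image
        "size": size,
        "style": style,
        "quality": quality,
    }


if __name__ == "__main__":
    body = build_dalle3_request(
        "A black spider made entirely out of yarn, with red eyes",
        size="1792x1024",  # widescreen
        style="natural",
        quality="hd",
    )
    print(json.dumps(body, indent=2))
```

Actually sending this body to the API requires an OpenAI API key and paid usage, neither of which is shown here.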

Moving up from free usage to a paid ChatGPT subscription can unlock the widescreen images. I searched around to see where I could gain access to even more API options, and it turns out that you can also use all of these DALL-E 3 functions through a service called NightCafe. I have given NightCafe a separate review.

Copyright © 2024-2025 by Jeremy Birn
